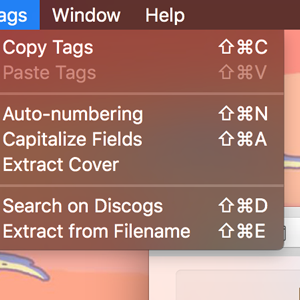

After reading a number of online articles on how to handle ‘data skew’ in one’s Spark cluster, I ran some experiments on my own ‘single JVM’ cluster to try out one of the techniques mentioned. This post presents the results, but before we get to those, I could not restrain myself from some nitpicking (below) about the definition of ‘skew.’ You can quite easily skip the next section if you just want to get to the Spark techniques.

Statistics defines a symmetric distribution as one in which the mean, median, and mode are all equal, and a skewed distribution as one where these properties do not hold. Many online resources use a conflicting definition of data skew, for example this one, which talks about skew in terms of “some data slices [having] more rows of a table than others.” We can’t use the traditional statistics definition of skew if our concern is unequal distribution of data across the partitions of our Spark tasks.

Consider a degenerate case where you have allocated 100 partitions to process a batch of data, and all the keys in that batch are from the same customer. Then, if we are using a hash or range partitioner, all records would be processed in one partition, while the other 99 would be idle. But clearly in this case the mean (average), the mode (most common value), and the median (the value ‘in the middle’ of the distribution) would all be the same. So, our data is not ‘skewed’ in the traditional sense, but definitely unequally distributed amongst our partitions.

Perhaps a better term to use instead of ‘skewed’ would be ‘non-uniform,’ but everyone uses ‘skewed.’ So, fine. I will stop losing sleep over this and go with the data-processing literature usage of the term.

Techniques for Handling Data Skew

More Partitions

Increasing the number of partitions may result in data associated with a given key being hashed into more partitions. However, this will likely not help when one or relatively few keys are dominant in the data. The following sections will discuss this technique in more detail.

Increasing the value of this setting will increase the likelihood that the Spark query engine chooses the BroadcastHashJoin strategy for joins in preference to the more data intensive SortMergeJoin. This involves transmitting the smaller to-be-joined table to each executor’s memory, then streaming the larger table and joining row-by-row.
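The broadcast hash join mentioned in this post (hold the smaller table in memory, stream the larger one, join row by row) can be sketched in plain Python. This is only an illustration of the idea, not Spark's implementation; the function name and the sample tables are made up for the example. In Spark itself, the setting that governs this choice is presumably `spark.sql.autoBroadcastJoinThreshold`, though the text here does not name it.

```python
from collections import defaultdict


def broadcast_hash_join(small_rows, large_rows, key):
    """Inner-join two iterables of dict rows on `key`, keeping only the small side in memory."""
    # Build phase: index the small table by join key. In Spark this in-memory
    # table is what would be shipped to every executor.
    lookup = defaultdict(list)
    for row in small_rows:
        lookup[row[key]].append(row)

    # Probe phase: stream the large table and join row by row.
    for row in large_rows:
        for match in lookup.get(row[key], []):
            yield {**match, **row}


# Hypothetical sample data for illustration only.
customers = [
    {"customer_id": 1, "name": "Acme"},
    {"customer_id": 2, "name": "Globex"},
]
orders = [
    {"customer_id": 1, "amount": 10},
    {"customer_id": 1, "amount": 25},
    {"customer_id": 3, "amount": 7},
]

joined = list(broadcast_hash_join(customers, orders, "customer_id"))
for row in joined:
    print(row)
```

Note that the large side is never sorted or shuffled by key in this sketch, which is why a dominant key on the large side does not pile up in one place the way it would in a sort-merge join.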
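To make the degenerate single-customer case concrete, here is a small simulation of hash partitioning. It is a sketch under assumptions: `zlib.crc32` stands in for whatever hash function a real partitioner uses, and the key names and record counts are invented. It also shows why adding partitions does not help when one key dominates.

```python
import zlib
from collections import Counter


def partition_sizes(keys, num_partitions):
    """Return the number of records per partition under a simple hash partitioner."""
    # crc32 is an assumed stand-in for the partitioner's hash function;
    # it is used here because it is deterministic across runs.
    counts = Counter(zlib.crc32(k.encode()) % num_partitions for k in keys)
    return [counts.get(p, 0) for p in range(num_partitions)]


# Degenerate case: every record in the batch belongs to the same customer.
single_key = ["customer-42"] * 1000

for n in (100, 1000):
    sizes = partition_sizes(single_key, n)
    busy = sum(1 for s in sizes if s > 0)
    print(f"{n} partitions: {busy} busy, largest holds {max(sizes)} records")

# For contrast: many distinct keys spread across the partitions.
distinct_keys = [f"customer-{i}" for i in range(1000)]
spread = partition_sizes(distinct_keys, 100)
print("distinct keys, largest partition:", max(spread))
```

Because the partition is chosen from the key alone, all records with the same key land together no matter how many partitions are allocated.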
